The Language Model Revolution

Public data has long been public in name but private in practice. Records exist, yet jargon, rigid workflows, and inconsistent formats fence off real access. Unclaimed property makes the problem concrete. To find money that belongs to you, you may need to navigate fifty different state systems, each with its own search page, required fields, and rules for valid queries. Ordinary residents face exact-match inputs, desktop-first forms, and pages that struggle with assistive technologies. Developers can sometimes script around the pain, but most people need a guide, not a schema. Language models flip the interface from syntax to intent. They listen to what people mean, translate that intent into the correct query for each system, and explain next steps in plain English. When access becomes conversational rather than procedural, public data stops feeling like a puzzle and starts working for everyone.

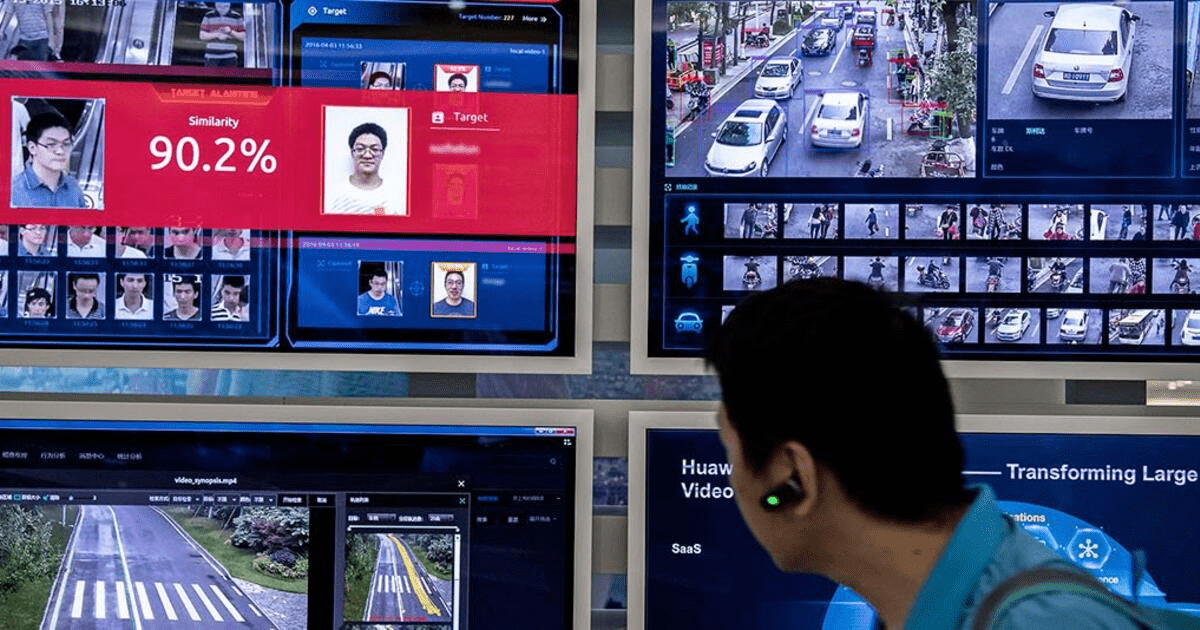

Language models turn fragmented government dashboards and records into clear answers citizens can act on.

The Access Barrier Problem

These portals fail citizens for structural reasons, not just bugs. Interfaces differ by jurisdiction, so the same question requires different fields in different places. Some sites demand perfect spelling while others allow limited fuzziness. Few offer a unified cross-state search, and many were built for large monitors, which complicates mobile use and screen reader navigation. People also lack the background knowledge the sites assume. They do not always know which state to search after years of moving for school or work. They are unsure which property types apply, whether multiple searches are needed, or how to interpret cryptic results. Language adds friction because legal pages are full of terms like escheat, claimant, and remittance that read like riddles. Portals rarely accept plain questions and often ignore name variation, from nicknames to married names to diacritics. The equity cost is real. Time-rich, tech-fluent users eventually succeed; everyone else is far more likely to give up.

Technical Barriers

Each state runs a distinct interface with different required fields and inconsistent search syntax. Many search engines accept only exact matches. There is no unified cross-state search, mobile layouts lag behind, and accessibility support for disabled users is patchy.

Knowledge Barriers

People rarely know which states to check, which property types apply, or that they may need several searches to account for life events and name changes. Results arrive without context, making interpretation uncertain.

Language Barriers

Government jargon and formal legal phrasing diverge from how people speak. Portals seldom accept natural language queries, and name variations are poorly handled, hiding valid matches.
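
To make the name-variation problem concrete, here is a minimal Python sketch of how a search layer might expand one name into the variants that an exact-match engine would otherwise miss. It strips diacritics with the standard `unicodedata` module, expands nicknames from a small table, and pairs every first-name variant with the current and former surnames. The `NICKNAMES` table and all function names are hypothetical illustrations, not taken from any state portal or product.

```python
import unicodedata
from collections.abc import Iterable

# Hypothetical nickname table; a real system would need a much larger, curated list.
NICKNAMES: dict[str, set[str]] = {
    "william": {"bill", "will", "billy", "liam"},
    "robert": {"bob", "rob", "bobby"},
    "elizabeth": {"liz", "beth", "betty", "eliza"},
}


def strip_diacritics(text: str) -> str:
    """Decompose accented characters (NFKD) and drop the combining marks: 'José' -> 'Jose'."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


def clean(name: str) -> str:
    """Lower-case, trim, and remove diacritics so spellings compare consistently."""
    return strip_diacritics(name).strip().lower()


def first_name_variants(first: str) -> set[str]:
    """Return the cleaned first name plus nickname and formal-name variants in both directions."""
    name = clean(first)
    variants = {name}
    for formal, nicks in NICKNAMES.items():
        if name == formal or name in nicks:
            variants |= {formal} | nicks
    return variants


def name_variants(first: str, last: str, former_surnames: Iterable[str] = ()) -> set[tuple[str, str]]:
    """Pair every first-name variant with the current and any former surnames (e.g. before marriage)."""
    surnames = {clean(s) for s in (last, *former_surnames)}
    return {(f, s) for f in first_name_variants(first) for s in surnames}


# Usage: one person, one accented surname, one former surname.
for pair in sorted(name_variants("Bill", "Núñez", ["García"])):
    print(pair)
```

Each (first, last) pair becomes one exact-match query, so a rigid portal search still works; the cost is query volume, which grows with every nickname and former surname.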

Language Model Solutions

Natural Language Understanding

Modern models let someone ask “Do I have unclaimed money?” and receive a valid multi-jurisdiction search without learning a single field name. They capture intent and fill in missing structure. If a user says “my grandmother passed away in Houston,” the system infers a search for a deceased owner and offers probate-aware guidance. If someone says “I moved from Texas to California,” the system proposes checks across both states, along with likely employers and banks. Query expansion turns vague phrases like “old bank account” into the full set of relevant categories, so partial knowledge does not block discovery.
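
The path from free text to a multi-state search can be sketched as two steps: a language model extracts a structured intent, and ordinary code fans that intent out into one query per jurisdiction. The sketch below assumes the extraction has already happened. The `Intent` fields, the `PHRASE_EXPANSIONS` table (a fixed stand-in for model-driven query expansion), and the `owner`/`deceased` search types are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical expansion table standing in for model-driven query expansion.
PHRASE_EXPANSIONS: dict[str, list[str]] = {
    "old bank account": ["checking account", "savings account", "certificate of deposit", "safe deposit box"],
    "money from my old job": ["unpaid wages", "final paycheck"],
}


@dataclass
class Intent:
    """Structured intent a language model might extract from free text (hypothetical schema)."""
    first_name: str
    last_name: str
    states: list[str]        # every state the person lived or worked in
    phrase: str = ""         # the user's own words for what they are looking for
    deceased: bool = False   # True for requests like "my grandmother passed away in Houston"


@dataclass
class StateQuery:
    state: str
    first_name: str
    last_name: str
    search_type: str                                      # "owner" or "deceased" (hypothetical labels)
    categories: list[str] = field(default_factory=list)   # empty list means "search all categories"


def build_search_plan(intent: Intent) -> list[StateQuery]:
    """Fan one intent out into one query per state, with the vague phrase expanded into categories."""
    categories = PHRASE_EXPANSIONS.get(intent.phrase.strip().lower(), [])
    search_type = "deceased" if intent.deceased else "owner"
    return [
        StateQuery(state, intent.first_name, intent.last_name, search_type, list(categories))
        for state in dict.fromkeys(intent.states)  # drop duplicate states, keep first-seen order
    ]


# Usage: "I moved from Texas to California and think I left an old bank account behind."
plan = build_search_plan(Intent("Ana", "Lopez", ["TX", "CA"], phrase="old bank account"))
for query in plan:
    print(query.state, query.search_type, query.categories)
```

Keeping the fan-out in plain code means the model only has to get the intent right; validating field names and state coverage stays deterministic.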

Semantic Search Capabilities

Vector embeddings capture meaning beyond keywords, surfacing the right record despite misspellings, nicknames, or transposed characters. Similarity search retrieves near matches without brittle hand-written rules. Cross-state concept mapping aligns inconsistent property categories behind the scenes. Multilingual support lets residents ask questions and receive explanations in their preferred language.
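
At its core, similarity search scores every record against the query vector and keeps the nearest ones. The sketch below uses character-trigram counts and cosine similarity as a deliberately simple stand-in for a learned embedding model. Trigrams tolerate misspellings and transposed letters, but unlike learned embeddings they will not link a nickname such as Bill to William. A production system would presumably swap `trigram_vector` for an embedding model and the linear scan for a vector database index; all names here are illustrative.

```python
import math
from collections import Counter


def trigram_vector(text: str) -> Counter:
    """Bag of character trigrams: a crude, illustrative stand-in for a learned embedding."""
    padded = f"  {text.lower().strip()} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def nearest(query: str, records: list[str], k: int = 3) -> list[tuple[str, float]]:
    """Linear-scan nearest-neighbour search; a vector database would index this instead."""
    qv = trigram_vector(query)
    scored = [(r, cosine(qv, trigram_vector(r))) for r in records]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


for name, score in nearest("Jonathon Smtih", ["Jonathan Smith", "Joan Smithers", "Nathan Jones"]):
    print(f"{score:.2f}  {name}")
```

Despite two typos in the query, “Jonathan Smith” ranks first, with no rule written for either mistake.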

Intelligent Guidance

Models explain requirements in plain language, outline the documents a claim will need, and clarify why some records are filtered out. They compose a personalised search plan that starts broad, ranks likely matches, and invites a deeper pass where confidence is low. Using transformer-based language models, platforms like ClaimNotify let people type “money from my old job” rather than memorise categories such as unpaid wages or final paycheck, making access feel human rather than bureaucratic.
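
A personalised search plan of this kind amounts to ranking candidate records and deciding what to do at each confidence level. This minimal sketch assumes each candidate already carries a similarity score in [0, 1]; the `LIKELY` and `POSSIBLE` thresholds, the bucket names, and the plain-English messages are hypothetical and would need tuning against real outcomes.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    state: str
    owner_name: str
    category: str
    score: float  # similarity in [0, 1] produced by the search step


# Hypothetical thresholds; real values would be tuned against confirmed claims.
LIKELY = 0.85
POSSIBLE = 0.60


def triage(candidates: list[Candidate]) -> dict[str, list[Candidate]]:
    """Rank candidates by score and split them into likely, deeper-pass, and set-aside buckets."""
    plan: dict[str, list[Candidate]] = {"likely": [], "deeper_pass": [], "set_aside": []}
    for cand in sorted(candidates, key=lambda c: c.score, reverse=True):
        if cand.score >= LIKELY:
            plan["likely"].append(cand)
        elif cand.score >= POSSIBLE:
            plan["deeper_pass"].append(cand)
        else:
            plan["set_aside"].append(cand)
    return plan


def summarise(plan: dict[str, list[Candidate]]) -> list[str]:
    """Turn the buckets into plain-English next steps, including why records were set aside."""
    lines = [f"Likely match in {c.state}: {c.category} held for {c.owner_name}." for c in plan["likely"]]
    lines += [
        f"Possible match in {c.state} for {c.owner_name}; confirming a past address would help."
        for c in plan["deeper_pass"]
    ]
    if plan["set_aside"]:
        lines.append(f"{len(plan['set_aside'])} record(s) were set aside because the details differed too much.")
    return lines


plan = triage([
    Candidate("CA", "Ana Lopes", "savings account", 0.71),
    Candidate("TX", "Ana Lopez", "unpaid wages", 0.93),
    Candidate("CA", "Anna Loper", "utility deposit", 0.32),
])
print("\n".join(summarise(plan)))
```

The middle bucket is where a follow-up question pays off: asking for one past address is cheaper for the user than reviewing every weak match.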

Technical Implementation

Under the hood, the stack pairs learning with grounding. Embedding models generate vectors for names, addresses, and free text. A vector database supports fast similarity lookup, while a normalised schema keeps disparate state exports consistent. Fine-tuning on government terminology trains models to recognise agency labels and map them to unified fields. Retrieval-augmented generation anchors explanations to trusted records, reducing hallucinations and providing citations that the interface can show. Cost stays in check by selecting compact models for dialogue, reserving larger models for tough disambiguation, batching offline backfills, and caching popular queries so the system does not recompute the same advice.
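
Two of the plumbing ideas in this paragraph, a normalised schema and cost control through model routing and caching, fit in a few lines of Python. The sketch assumes hypothetical export field labels for two unnamed states (`STATE_A`, `STATE_B`) and hypothetical model names; in the architecture described above, a fine-tuned model would learn the label mapping rather than rely on a hand-written table. Caching uses the standard `functools.lru_cache`, with a placeholder where the real model call would go.

```python
from functools import lru_cache

# Hypothetical export labels for two unnamed states, mapped onto one unified schema.
# A fine-tuned model would learn these mappings; a fixed table stands in here.
FIELD_MAP: dict[str, dict[str, str]] = {
    "STATE_A": {"OWNER_NAME": "owner_name", "PROP_TYPE": "category", "HOLDER": "holder", "AMT": "amount"},
    "STATE_B": {"Owner Name": "owner_name", "Property Type": "category",
                "Reported By": "holder", "Cash Reported": "amount"},
}


def normalise(state: str, raw: dict[str, str]) -> dict[str, str]:
    """Rename a raw export row's fields to unified names and tag it with its state; unknown fields are dropped."""
    mapping = FIELD_MAP[state]
    record = {unified: raw[label] for label, unified in mapping.items() if label in raw}
    record["state"] = state
    return record


def pick_model(ambiguous_matches: int) -> str:
    """Use the compact model by default; escalate only when several near-identical records compete."""
    return "large-model" if ambiguous_matches > 1 else "compact-model"  # hypothetical model names


@lru_cache(maxsize=10_000)
def cached_advice(question: str, model: str) -> str:
    """Placeholder for an expensive model call; repeated (question, model) pairs are served from the cache.
    Callers should normalise the question text first so trivially different phrasings share an entry."""
    return f"[{model}] guidance for: {question}"


row_a = normalise("STATE_A", {"OWNER_NAME": "LOPEZ ANA", "PROP_TYPE": "WAGES", "AMT": "412.50"})
row_b = normalise("STATE_B", {"Owner Name": "Lopez, Ana", "Property Type": "Savings", "Cash Reported": "88.00"})
print(row_a)
print(row_b)

question = "how do i claim unpaid wages"
cached_advice(question, pick_model(1))
cached_advice(question, pick_model(1))  # second call is a cache hit
print(cached_advice.cache_info())
```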

Impact and Future

Putting natural language in front of public data changes outcomes quickly. Search success rises from around 30 percent, when users guess at fields and jurisdictions, to above 85 percent, because the system interprets intent and checks multiple jurisdictions at once. Time to the first actionable result drops from hours to minutes, and satisfaction climbs as the process feels guided rather than adversarial. Accessibility improves as chat and voice welcome people who struggle with forms or screens.

New possibilities follow. The platform can proactively notify a person when a likely match appears. A guided chatbot can walk someone through evidence collection. Voice search can help elderly and disabled users. Claim forms can be prefilled from verified data to reduce abandonment. Predictive outreach can identify the communities most likely to benefit, so limited resources reach the right neighbourhoods.

Responsible builders also address risk. Grounding and guardrails reduce hallucinations, data minimisation and encryption protect privacy, and model choice and caching keep costs in check. Human reviewers remain in the loop for sensitive decisions.
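
Retrieval grounding and guardrails can be illustrated with two checks: the prompt contains only retrieved records, each tagged with its ID, and a reply is released only if every citation it makes points at one of those records. The sketch below assumes a hypothetical `Record` type and leaves the model call itself out. The routing labels (`send`, `human_review`, `fallback`) are illustrative, with cases flagged as sensitive sent to a human reviewer.

```python
import re
from dataclasses import dataclass


@dataclass
class Record:
    record_id: str
    state: str
    owner_name: str
    category: str


def build_prompt(question: str, records: list[Record]) -> str:
    """Put only retrieved records, each tagged with its ID, in front of the model."""
    context = "\n".join(f"[{r.record_id}] {r.state}: {r.category} held for {r.owner_name}" for r in records)
    return (
        "Answer using only the records below and cite record IDs in square brackets.\n\n"
        f"Records:\n{context}\n\nQuestion: {question}"
    )


def citations_valid(reply: str, records: list[Record]) -> bool:
    """Guardrail: the reply must cite at least one record, and only records that were retrieved."""
    cited = set(re.findall(r"\[([^\]]+)\]", reply))
    allowed = {r.record_id for r in records}
    return bool(cited) and cited <= allowed


def route(reply: str, records: list[Record], sensitive: bool) -> str:
    """Decide what happens to a model reply (hypothetical labels)."""
    if not records or not citations_valid(reply, records):
        return "fallback"        # scripted "no supported match found" message instead of a guess
    if sensitive:
        return "human_review"    # e.g. deceased-owner or disputed claims
    return "send"


records = [Record("TX-0001", "TX", "Ana Lopez", "unpaid wages")]
print(build_prompt("Do I have unclaimed money?", records))
print(route("Texas holds unpaid wages for you [TX-0001].", records, sensitive=False))        # prints "send"
print(route("California also holds a refund for you [CA-7777].", records, sensitive=False))  # prints "fallback"
```

The citation check is cheap and deterministic, so it can run on every reply regardless of which model produced it.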

Democratizing Data Access

Language models are not a novelty here. They are the missing interface that turns raw records into usable information. They translate intent into valid queries, explain results without jargon, and meet people where they are. The goal is not to impress engineers. The goal is to remove friction so anyone can recover what already belongs to them. ClaimNotify shows how citizen-centric design and language models can coexist in production while honouring accuracy, privacy, and cost. The call to action is clear. Civic tech projects should adopt language models with retrieval grounding, multilingual support, and careful privacy safeguards, then measure success by recovered assets and completed claims, not clicks. When natural language becomes the front door to public data, access no longer depends on decoding a form, and more families get back what is already theirs.